Architecture

This page provides a high-level overview of how NautobotGPT processes your queries and delivers responses.

How NautobotGPT Works

NautobotGPT augments a Large Language Model (LLM) with proprietary Nautobot knowledge using a technique called Retrieval-Augmented Generation (RAG): material relevant to your prompt is retrieved and supplied to the model as additional context. This is what makes it effective for assisting with Nautobot. It combines the broad capabilities of modern LLMs with curated domain expertise from Network to Code's Nautobot experts, repositories, and knowledge base.

When you submit a prompt, NautobotGPT analyzes your intent and responds using:

- Enriched context from Network to Code's internal documents, knowledge base, repositories, and other curated, proprietary Nautobot data

- Native model knowledge from the underlying LLM

This means NautobotGPT is not just a general-purpose AI: its answers are grounded in Nautobot-specific sources, so the guidance it gives is relevant, actionable, and informed by real-world experience.
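To make the retrieve-then-generate idea concrete, here is a minimal sketch of RAG in Python. It is an illustration only, not NautobotGPT's implementation: the `DOCUMENTS` list, the function names, and the prompt wording are all hypothetical, and a simple bag-of-words cosine similarity stands in for the semantic (embedding-based) search a production system would typically use.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for a curated knowledge collection.
DOCUMENTS = [
    "Nautobot Jobs are Python classes that run custom automation logic.",
    "Locations in Nautobot organize devices into sites, buildings, and rooms.",
    "The Nautobot REST API authenticates requests with a user token.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, top_k=2):
    """Return the top_k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query, documents, top_k=2):
    """Combine the retrieved context with the user's question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return (
        "Use the context below to answer the question.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("How do I write Jobs in Python?", DOCUMENTS))
```

The resulting prompt is what would be sent to the LLM, which can then draw on both the retrieved context and its own native knowledge.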

Multi-Agent

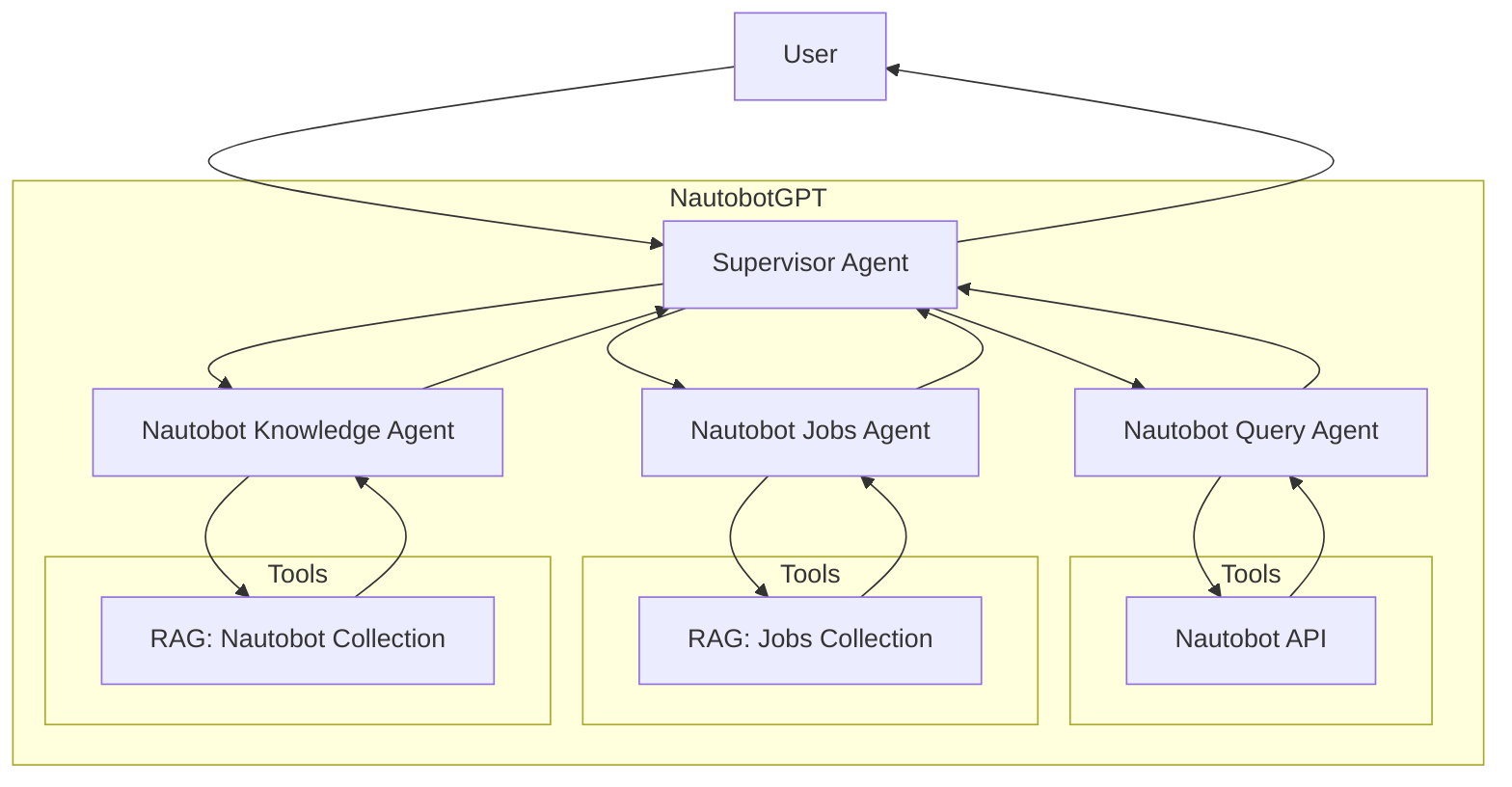

NautobotGPT uses a multi-agent architecture where a Supervisor Agent intelligently routes your query to the most appropriate specialized agent:

- Nautobot Knowledge Agent: Handles general questions about Nautobot concepts, data models, configuration, best practices, and troubleshooting. This agent draws from curated Nautobot documentation and expert knowledge.

- Nautobot Jobs Agent: Specializes in helping you write, debug, migrate, and understand Nautobot Jobs. This agent has access to a dedicated collection of Jobs-related documentation and code patterns.

- Nautobot Query Agent: Connects directly to your Nautobot instance to fetch live data and execute queries against it. See Connecting to Nautobot for setup instructions.
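As an illustration of what querying live data from a Nautobot instance can look like, the sketch below builds a request against Nautobot's REST API using token authentication. The helper names, the example host, and the token are placeholders, and this page does not document the exact requests the Nautobot Query Agent issues, so treat this as an assumption-laden example rather than the agent's actual behavior.

```python
import json
import urllib.parse
import urllib.request


def build_device_request(base_url, token, **filters):
    """Build a GET request for the Nautobot devices endpoint.

    base_url and token are placeholders supplied by the caller;
    filters become query-string parameters (for example, name="edge-01").
    """
    url = f"{base_url.rstrip('/')}/api/dcim/devices/"
    query = urllib.parse.urlencode(filters)
    if query:
        url = f"{url}?{query}"
    return urllib.request.Request(
        url,
        headers={
            # Nautobot's REST API accepts "Token <key>" authorization.
            "Authorization": f"Token {token}",
            "Accept": "application/json",
        },
        method="GET",
    )


def fetch_json(request, timeout=10):
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


if __name__ == "__main__":
    # Hypothetical host and token; nothing is sent until fetch_json() is called.
    req = build_device_request(
        "https://nautobot.example.com", "YOUR_API_TOKEN", name="edge-01"
    )
    print(req.get_method(), req.full_url)
```

Building the request separately from sending it keeps the example safe to run without a reachable Nautobot instance.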

The Supervisor Agent determines which specialized agent (or combination of agents) is best suited to answer your question, gathers the results, and composes a final response.
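The route, gather, and compose pattern described above can be sketched as follows. This is a simplified illustration: the `Agent` class, the keyword sets, and the canned handler responses are hypothetical, and simple keyword matching stands in for whatever intent analysis the real Supervisor Agent performs.

```python
import re
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass(frozen=True)
class Agent:
    """A specialized agent: a name, trigger keywords, and a handler."""

    name: str
    keywords: FrozenSet[str]
    handle: Callable[[str], str]


# Hypothetical agents with canned handlers; real agents would call an LLM,
# a document collection, or the Nautobot API.
KNOWLEDGE = Agent(
    "Nautobot Knowledge Agent",
    frozenset(),
    lambda q: f"documentation context for: {q}",
)
JOBS = Agent(
    "Nautobot Jobs Agent",
    frozenset({"job", "jobs"}),
    lambda q: f"Jobs context for: {q}",
)
QUERY = Agent(
    "Nautobot Query Agent",
    frozenset({"devices", "inventory", "count"}),
    lambda q: f"live data for: {q}",
)


def route(query: str) -> List[Agent]:
    """Pick every agent whose keywords appear in the query.

    Falls back to the Knowledge Agent when nothing else matches.
    """
    words = set(re.findall(r"[a-z]+", query.lower()))
    selected = [agent for agent in (QUERY, JOBS) if agent.keywords & words]
    return selected or [KNOWLEDGE]


def supervise(query: str) -> str:
    """Gather each selected agent's result and compose one response."""
    results = [(agent.name, agent.handle(query)) for agent in route(query)]
    return "\n".join(f"{name}: {text}" for name, text in results)


if __name__ == "__main__":
    print(supervise("Write a job that lists my devices"))
```

A query that touches more than one area, such as the one in the usage example, is routed to several agents, and their results are combined into a single response.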

Conversation Flow

The following diagram illustrates how a typical conversation flows through NautobotGPT. Depending on your query, the Supervisor Agent delegates to one or more specialized agents, each of which uses its own tools and knowledge sources to build a comprehensive response.

    sequenceDiagram
        actor User
        participant NautobotGPT
        participant Supervisor Agent
        participant Nautobot Query Agent
        participant Nautobot
        participant Nautobot Jobs Agent
        participant Jobs Collection
        participant Nautobot Knowledge Agent
        participant Nautobot Collection
        User->>NautobotGPT: Sends query
        NautobotGPT->>Supervisor Agent: Forwards query to LLM
        Supervisor Agent->>Supervisor Agent: Processes query
        alt Query related to Nautobot data
            Supervisor Agent->>Nautobot Query Agent: Calls other agent
            Nautobot Query Agent->>Nautobot Query Agent: Processes query
            Nautobot Query Agent->>Nautobot: Sends API request
            Nautobot-->>Nautobot Query Agent: Returns API response
            Nautobot Query Agent->>Nautobot Query Agent: Generates response with context
            Nautobot Query Agent-->>Supervisor Agent: Provides additional context
            Supervisor Agent->>Supervisor Agent: Generates response with context
        end
        alt Query related to Nautobot Jobs
            Supervisor Agent->>Nautobot Jobs Agent: Calls other agent
            Nautobot Jobs Agent->>Nautobot Jobs Agent: Processes query
            Nautobot Jobs Agent->>Jobs Collection: RAG semantic search
            Jobs Collection-->>Nautobot Jobs Agent: Returns relevant docs
            Nautobot Jobs Agent->>Nautobot Jobs Agent: Generates response with context
            Nautobot Jobs Agent-->>Supervisor Agent: Provides additional context
            Supervisor Agent->>Supervisor Agent: Generates response with context
        end
        alt Query related to Nautobot
            Supervisor Agent->>Nautobot Knowledge Agent: Calls other agent
            Nautobot Knowledge Agent->>Nautobot Knowledge Agent: Processes query
            Nautobot Knowledge Agent->>Nautobot Collection: RAG semantic search
            Nautobot Collection-->>Nautobot Knowledge Agent: Returns relevant docs
            Nautobot Knowledge Agent->>Nautobot Knowledge Agent: Generates response with context
            Nautobot Knowledge Agent-->>Supervisor Agent: Provides additional context
            Supervisor Agent->>Supervisor Agent: Generates response with context
        end
        Supervisor Agent-->>NautobotGPT: Returns response
        NautobotGPT-->>User: Displays LLM response

Deployment

NautobotGPT is provisioned exclusively through the Nautobot Cloud Console. Each customer receives their own isolated NautobotGPT instance. This instance allows an unlimited number of users and is fully provisioned and managed by Network to Code.

This deployment architecture ensures:

- Single-tenant isolation — Your instance is completely separate from other customers

- Secure AWS-native deployment — Protected by an AWS Web Application Firewall (WAF) enforcing security controls and filtering traffic per industry best practices

- Simplified access and management — Managed through the Nautobot Cloud Console

Security & Privacy

Security and privacy are foundational to NautobotGPT's architecture:

- Access Controls — Access is managed through local user accounts created and authenticated within your deployed environment. Each user logs in with unique credentials via the web interface.

- Data Handling — All messages and inputs are kept confidential within your deployed environment. Chat histories are stored locally and are only visible to the individual user — never to other customers or external parties.

- Model Privacy — Your data, chats, and conversations are never used to train NautobotGPT or any of the underlying models.

- Tenant Isolation — Each customer is assigned a dedicated project and API key with the LLM provider, ensuring all interactions are logically isolated and segmented to only your organization.

For more information on Security & Privacy, see the FAQ.